In this blog post, I will briefly cover a few scenarios of what can be easily optimized during the migration process.

Cloud-optimized environment:

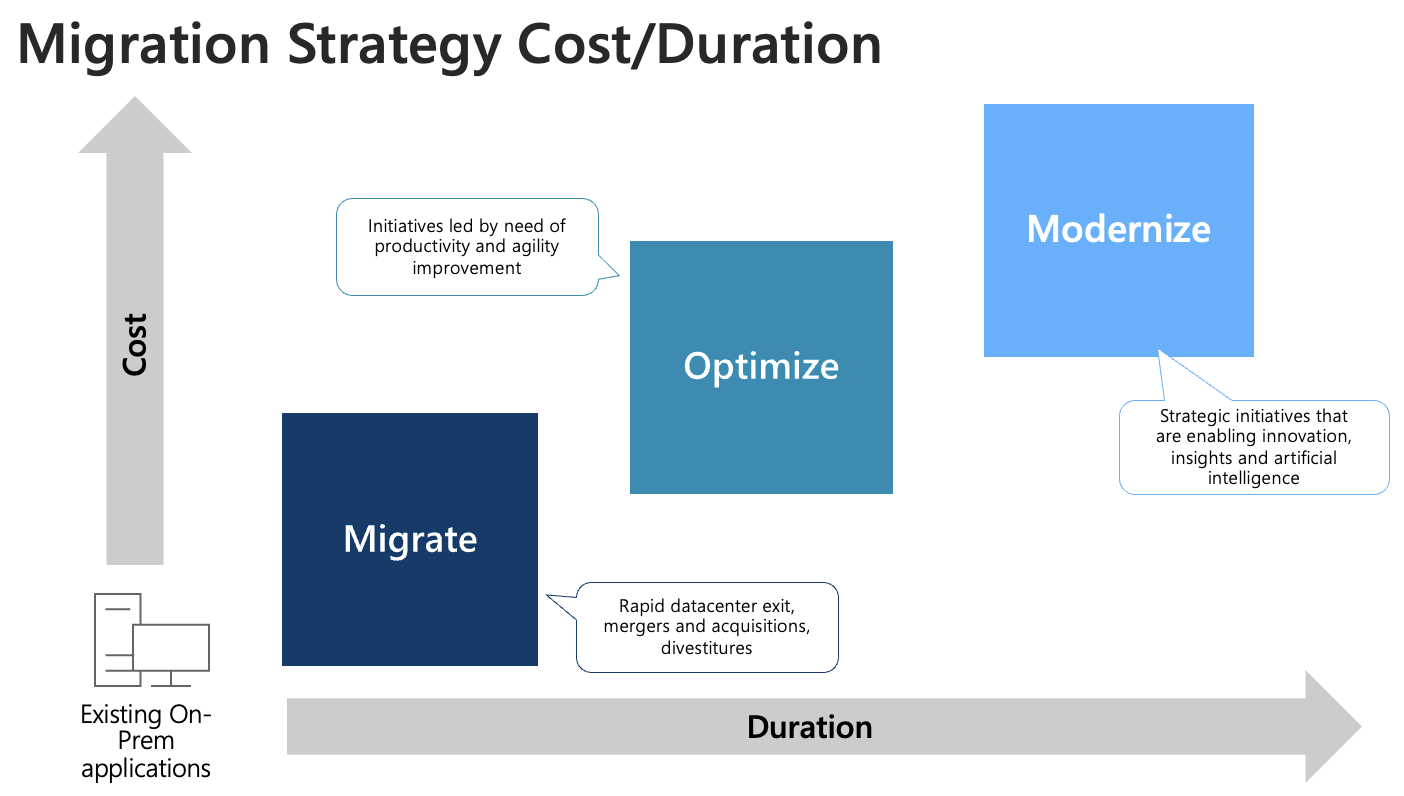

Migrating our services from the on-premises environment to Azure as they are is not the best way to utilize the power of the cloud. Running everything on servers is the least cost-effective method. Here are the different migration types and levels of optimization:

Lift-and-shift

Also referred to as rehosting, this is the migration type where we migrate our workloads as-is while avoiding as many modifications to the systems as possible. This allows us to migrate quickly, but our systems will not be taking advantage of cloud technologies. The core technology used in this scenario is the virtual machine (VM).

Refactor

Refactoring applications involves some changes to the design, but these mainly relate to the service on which the application runs and not to the application itself. If the application supports it, this is usually not a very difficult process, and it brings the benefits of a reduced management layer and a better pricing model.

Revise

Revising applications often means that we need to modify them to support the cloud environment. Sometimes this means a version upgrade, but more often it means changing the code and the structure to make the application work with our new requirements. This migration type can require a significant time investment.

Rebuild

This is where we decide to build a brand new application that will fully leverage cloud-native serverless, Big Data, and AI services. This will reduce the cost of ownership and simplify future development, but it will require a considerable time investment.

Replace / Decommission

Sometimes we can decide to replace our existing applications with SaaS or existing PaaS services and decommission them rather than move them to Azure. This can also be a quick migration method, depending on the application complexity and user involvement.

To simplify your decision, check out the Compute Decision Tree.

For more information, refer to THIS documentation.

Migrate now, optimize later

It is not often that we set out to optimize our services before moving them to Azure. Companies usually don’t have time to rewrite their applications or migrate away from services that can’t be optimized. This is because stakeholders want to see results quickly and avoid paying for on-premises data centers in parallel with Azure. Or, more often, the time constraint exists simply because the migration is tied to an event, such as the end of a contract or a datacenter exit. And sometimes, optimization can require a significant initial investment that simply doesn’t fit the current budget.

That is why companies migrate everything as-is and optimize later. This is usually the fastest and easiest way to migrate and allows Azure administrators more time to familiarize themselves with the Azure environment and tools. However, doing the migration and then optimization means that we have to do some extra work and, in most cases, cause multiple outages.

Optimize before or during the migration

The good news is that optimization can sometimes be as easy as migration or even more straightforward. And since we are already performing in-depth analysis and documenting every dependency in our environment, this is also a perfect time to do this. Some services can be replaced by cloud services or simplified.

The following list is based on my personal experience of migrating hundreds of servers and services. I found these particular services to be widespread in company environments. Optimizing them does not take more time or cause a significantly longer outage than the rehost migration method.

Backups / Disaster Recovery

By design, public clouds allow us to use multiple interconnected locations. This makes creating off-site backups and disaster recovery solutions accessible to everyone. Migration to Azure is the best time to optimize our DR and backup strategies to utilize these new options fully. This is obvious if we use tape backups or some specific hardware, but it should also be considered if we are using software backup solutions. Check if your software offers Azure integration, look at third-party solutions in the Azure Marketplace, or use Azure Backup and Azure Site Recovery. In any case, you will end up with a cloud-optimized DR.

High Availability and Scalability

The main selling point of public clouds, in general, is that we have access to “unlimited” resources. This allows us to scale our environment easily and to use redundant resources to achieve higher availability. In most cases, we don’t need to change anything in our application; we only need to start using these options. There is no better time to plan this optimization than during the initial migration. Use multiple cloud regions to be highly available and closer to your customers, and use load balancing and automatic scaling for better performance. These decisions should be a big part of the cloud design documentation and our migration guide.

SQL Cluster to PaaS

Migrating SQL clusters to Azure is a lot of work. Usually, we need to make changes to our applications. And because copying data can take a long time, we can’t simply turn off production and start copying our existing servers. Instead, we need to deploy a brand new SQL cluster, copy the data, and then turn off production only for the time required to sync the last changes. However, sometimes our applications fully support migration to one of the many SQL PaaS services Azure offers. PaaS is easier to deploy, includes HA automatically, and is easier to maintain and to migrate to. And if we factor in that a full-featured SQL Always-On Cluster requires the SQL Enterprise license, PaaS services are often cheaper as well.
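To get a feel for why the initial data copy cannot happen inside an outage window, here is a rough back-of-the-envelope sketch. The function name, the 80% link efficiency, and the sample numbers are my own assumptions for illustration, not measured values.

```python
def estimate_copy_hours(data_tb: float, link_mbps: float, efficiency: float = 0.8) -> float:
    """Roughly estimate how many hours it takes to copy data_tb terabytes.

    Assumes decimal units (1 TB = 10**12 bytes) and that only a fraction
    (efficiency) of the nominal link speed is usable in practice.
    """
    if data_tb < 0 or link_mbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("expected data_tb >= 0, link_mbps > 0, 0 < efficiency <= 1")
    total_bits = data_tb * 1e12 * 8                  # terabytes -> bits
    effective_bps = link_mbps * 1e6 * efficiency     # usable bits per second
    return total_bits / effective_bps / 3600         # seconds -> hours


# Hypothetical example: 2 TB of databases over a 500 Mbps link.
hours = estimate_copy_hours(data_tb=2, link_mbps=500)
print(f"Initial copy takes roughly {hours:.1f} hours")
```

With these assumed numbers the initial copy takes about 11 hours, which is why the bulk of the data is copied while production keeps running and only the final sync happens during the outage.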

Scripts running as Scheduled Tasks

Using scripts to perform various tasks is great. And in Azure, where everything can be scripted and automated, this makes even more sense. However, keeping a VM just to run these scripts is a waste of money and resources in the cloud. Scripts can be easily moved to Azure Functions or Azure Automation accounts. If you need to use a specific OS or install some special modules, DLLs, or anything like that, you can always deploy that in Azure DevOps. Moving scripts from a VM to PaaS makes them cheaper (or even free) to run and easier to maintain. You also get built-in logging, HA, notifications, and seamless integration with other Azure services, such as Key Vault or Azure Monitor.
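One small detail when moving a scheduled task to an Azure Functions timer trigger is the schedule format: timer triggers use six-field NCRONTAB expressions (second, minute, hour, day, month, day of week), evaluated in UTC by default. The helper below is a minimal sketch of that translation for a simple "run daily at HH:MM" task; the function name is hypothetical, and it only builds the expression string rather than calling any Azure API.

```python
def daily_ncrontab(time_hhmm: str) -> str:
    """Build a six-field NCRONTAB expression for a task that runs once a day.

    Field order: {second} {minute} {hour} {day} {month} {day-of-week}.
    """
    hour_text, minute_text = time_hhmm.split(":")
    hour, minute = int(hour_text), int(minute_text)
    if not (0 <= hour <= 23 and 0 <= minute <= 59):
        raise ValueError(f"not a valid time of day: {time_hhmm!r}")
    # Second 0 of the given minute and hour, every day of every month.
    return f"0 {minute} {hour} * * *"


# A Scheduled Task that ran daily at 02:30 on the old server:
print(daily_ncrontab("02:30"))
```

Remember to convert the local time of the old Scheduled Task to UTC (or configure the time zone for the function app) before reusing the schedule.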

Optimizing VM size

One of the first things we need to do when considering migration to Azure is to analyze our current environment. Based on that, we can estimate the project cost, identify critical applications and services, and identify any potential show-stoppers. This is sometimes a two-stage process: we do it once before project approval and then again, in more depth, just before the project starts. In any case, at the beginning of the Azure migration, we should have all the information about our workloads. This is a great time to think about what our applications really need and assign them the correct Azure VM size and family. Azure offers many different types of virtual machines, which is different from our on-premises environments, where we didn’t have this choice. Selecting the correct size and family significantly impacts performance and total cost.
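The right-sizing idea can be sketched in a few lines: take the observed peak usage from the assessment, add some headroom, and pick the cheapest size that still fits. Everything in this example is hypothetical, including the size names, the prices, and the 20% headroom; real Azure sizes and prices should come from your assessment tooling and the pricing pages.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class VmSize:
    name: str
    vcpus: int
    memory_gib: float
    monthly_cost: float  # made-up number, for illustration only


# Hypothetical catalog; these are NOT real Azure size names or prices.
CATALOG: List[VmSize] = [
    VmSize("small-general", 2, 8, 70.0),
    VmSize("medium-general", 4, 16, 140.0),
    VmSize("medium-memory", 4, 32, 190.0),
    VmSize("large-general", 8, 32, 280.0),
]


def right_size(peak_vcpus: float, peak_memory_gib: float,
               headroom: float = 0.2,
               catalog: List[VmSize] = CATALOG) -> Optional[VmSize]:
    """Return the cheapest size that covers peak usage plus headroom, or None."""
    need_cpu = peak_vcpus * (1 + headroom)
    need_mem = peak_memory_gib * (1 + headroom)
    candidates = [s for s in catalog
                  if s.vcpus >= need_cpu and s.memory_gib >= need_mem]
    return min(candidates, key=lambda s: s.monthly_cost, default=None)


# An on-premises server provisioned with 8 vCPUs / 32 GiB that
# actually peaks at 2.5 vCPUs and 20 GiB of memory:
choice = right_size(peak_vcpus=2.5, peak_memory_gib=20)
print(choice.name if choice else "no matching size")
```

In this made-up case the memory-heavy 4 vCPU size wins over a like-for-like 8 vCPU size, which is exactly the kind of saving that sizing by family makes possible.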

Storage Servers

Moving your local file server to Azure is neither the best nor the cheapest way to share files in Azure. And this is even more true if you need to make it highly available. Azure offers a few file-sharing solutions for different scenarios. PaaS services have built-in high availability, and they are easy to manage. You can use Azure File Shares for your applications and user shares. SQL clusters can utilize Premium File Shares, which bring higher performance with low maintenance. You can even use Azure File Sync to keep a local copy of files that are too large to be accessed over the internet. And the best part is that the migration is easier and causes a shorter outage than moving the file server itself.

Also, if you are still mapping network folders to user profiles, try to utilize SharePoint, Teams, and OneDrive instead. This part is not going to be quick and easy, and it will require a project on its own, but it makes user files more secure and accessible remotely.

Monitoring

Moving services to the cloud means that you need to monitor different things in different places. Azure Monitor has proven to be a very useful tool for this, and it can easily be combined with your existing monitoring solution. Don’t leave this for later.

Network Segmentation

Moving into a new IP space means that you can start fresh and implement well-designed network segmentation for your resources. This is not easy to do in our existing environments, but a fresh start means we can plan this right. I agree that managing a bunch of subnets with Azure Network Security Groups can become complicated quickly. If you use Azure Firewall or another third-party firewall, all rules will be managed centrally. Don’t miss out on this step during the migration preparation phase. It only takes a little bit of planning if done in time, but it becomes more difficult or completely impossible later.
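A little planning here can be as simple as carving the new address space into one subnet per tier before anything is deployed. Here is a minimal sketch using Python’s standard `ipaddress` module; the address space, the tier names, and the /24 subnet size are assumptions chosen for illustration.

```python
import ipaddress
from typing import Dict, List


def plan_subnets(address_space: str, tiers: List[str],
                 new_prefix: int = 24) -> Dict[str, ipaddress.IPv4Network]:
    """Assign one non-overlapping subnet of the address space to each tier."""
    network = ipaddress.ip_network(address_space)
    subnets = network.subnets(new_prefix=new_prefix)  # generator of subnets
    plan = {}
    for tier in tiers:
        try:
            plan[tier] = next(subnets)
        except StopIteration:
            raise ValueError("address space is too small for all tiers") from None
    return plan


# Hypothetical virtual network address space and tiers.
plan = plan_subnets("10.20.0.0/16", ["web", "app", "data", "management"])
for tier, subnet in plan.items():
    print(f"{tier:<12}{subnet}")
```

Each tier then gets its own subnet that network security group or firewall rules can target, and the rest of the address space stays free for future growth.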

Protect your web-facing applications

Azure offers many options for securely publishing and load balancing web-facing applications. We can easily implement conditional access policies to block access or require MFA. Not always, but sometimes it is easier to replace existing technology with Azure than to migrate it.

Active Directory Federation Services

On-prem ADFS infrastructure usually contains 4+ servers. Because this is a critical component, these servers need to be highly available, load-balanced, and publicly available. Not having to migrate this to Azure saves both cost and time. Instead, we can consider replacing ADFS with AAD SSO before the migration starts. If we are already using AAD Connect to sync our accounts to AAD, this becomes even easier. We can utilize tools in AAD to analyze application and user readiness for AAD SSO. Replacing ADFS for some applications, such as Office 365, is as easy as ticking a few checkboxes.

VPN

Do we still need a VPN after migration? If you are giving users VPN access so they can reach specific servers or applications, that can be replaced by Azure Bastion or a Point-to-Site VPN. You can also consider implementing services such as Azure Virtual Desktop or Windows 365. In most cases, this is going to be an easy-to-implement solution that can be faster than migrating the VPN and RADIUS servers.

Unfortunately, this doesn’t resolve the need for a device-tunnel VPN in case you need your devices to access services like KMS, AD, CA, ADFS, etc. The solution for this is Intune, Autopilot and AAD joined devices. But that can be a large project for later.

Windows Server Update Services

If you are using a stand-alone WSUS server only to keep your Windows Servers up to date, replacing this service with Azure Update Management should be cheap and easy. Unfortunately, this will not work for client OS.

Management Server

Don’t migrate your old IT management server. You will not need the SAN, backup, switch management, and other tools you used on-premises. If you really need one, create a new multi-session Windows 10 VM in Azure instead. Also consider using the cloud version of Windows Admin Center and Azure Automanage.

There are many more services that can and should be optimized. In this article, I only focused on common things found in many environments that are as easy to optimize as to migrate.

If you have any suggestions, please let me know on Twitter.

Keep clouding around.

Vukašin Terzić